Fairness and Information

Southern information theorists after the civil war realized that although they could no longer exclude former slaves from the polls, they could exclude people based on other criteria like, say, education, and that these criteria happen to be highly correlated with formerly being a slave who was not allowed education. Republican information theorists continue to exploit this observation in ugly ways to exclude voters, like disenfranchising voters without street addresses (many Native Americans).

In the era of big data, these deplorable types of discrimination have become more insidious. Algorithms determine things like what you see on your Facebook feed or whether you are approved for a home loan. Although a loan approval can’t be explicitly based on race, it might depend on your zip code which may be highly correlated for historical reasons.

This leads to an interesting mathematical question: can we design algorithms that are good at predicting things like whether you are a promising applicant for a home loan without being discriminatory? This type of question is the heart of the emerging field of “fair representation learning”.

This is effectively an information theory question. We want to know if our data contains information about the thing that we would like to predict, but that is not informative about some protected variable. The contribution of a great PhD student in my group, Daniel Moyer, to the growing field of fair representation learning was to come up with an explicit and direct information-theoretic characterization of this problem. His results will appear as a paper at NIPS this year.

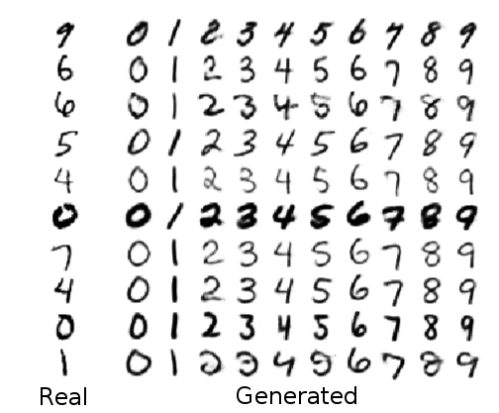

He showed that an information-theoretic approach could be more effective with less effort than previous approaches which rely on an adversary to test whether any protected information has leaked through. He also showed that you can use this approach in other fun ways. For instance, you can imagine counter-factual answers like what the data would look like if we changed just the protected variable and nothing else. As a concrete visual example, you can imagine that our “protected variable” is the digit in a handwritten picture. Now our neural net learns to represent the image, but without knowing which specific digit was written. Then we can run the neural net in reverse to reconstruct an image that looks similar to the original stylistically but with any value of the digit that we choose.

Fig. 3 from our paper.

Going back to the original motivation about fairness, even though we can define it in this information-theoretic way it’s not clear that this fits a human conception of fairness in all scenarios. Formulating fairness in a way that meets societal goals for different situations and is quantifiable is an ongoing problem in this interesting new field.

Filed under: Posted by Greg Ver Steeg | 1 Comment

That’s an impressive result. If I’m not mistaken, this is equivalent to the problem of controlling for confounding variables in statistics, which always seemed to me like an impossible task.

On the socio-economic side, I’m afraid that (a) as you note, the task of defining fairness is fraught (b) the technique won’t spontaneously be adopted, due to vested interests in preserving the appearance of fairness without actually being fair.

Suppose we’re talking about job applications to an equal-opportunity employer. Imagine that employee effectiveness is correlated with age. Does this imply that employee effectiveness cannot be used as a hiring criteria because it is correlated with age?

Obviously this isn’t the case, but I think there is a valid concern about ‘over-reach:’ if we don’t have tolerances on the amount of ‘leakage’ of protected information, instead requiring perfection in anonymizing these variables, we might sacrifice a significant amount of fair-game information as a side-effect. Think about the Wyner common information: in order to capture *all* the common entropy between two variables, you need a variable that is lower-bounded by the Shannon mutual information, but in principle could be up to the size of either variable even for arbitrarily small mutual information.

This is a worse-case scenario, but it’d be interesting to look into the cost/benefit trade-off between preserving useful information and eliminating unfair information. My hunch is that asymptotically approaching zero leakage is going to start eliminating a disproportionately large amount of fair information to remove a small amount of unfair information, and society will have to come to terms with that trade-off.